A 20-year-old from Spring, Texas, named Daniel Alejandro Moreno-Gama has been charged with attempted murder after he was accused of throwing a Molotov cocktail at the gate of Sam Altman’s San Francisco home on April 10. He then allegedly walked toward OpenAI’s Mission Bay headquarters and told employees he intended to burn the building down as well. He was reportedly carrying a manifesto – a “three-part series,” according to Fox News – that included a list of other AI executives and investors, along with their home addresses, and documents discussing the potential risks that AI poses to humanity, with a section titled “Some more words on the matter of our impending extinction.”

The documents also allegedly stated that “if I am going to advocate for others to kill and commit crimes, then I must lead by example and show that I am fully sincere in my message.” Moreno-Gama’s public defender has suggested he was having an “acute mental health crisis” at the time of the alleged attack.

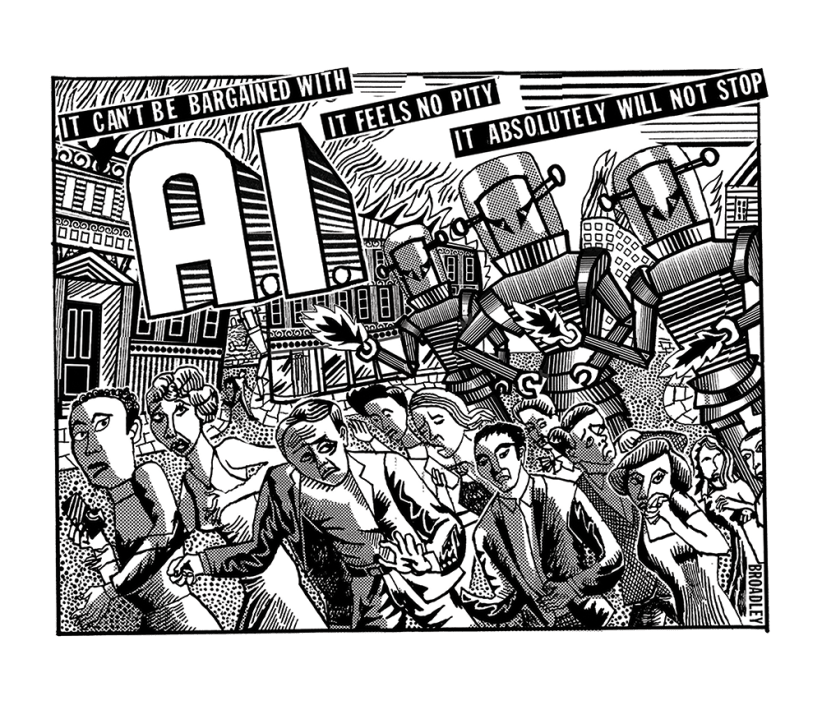

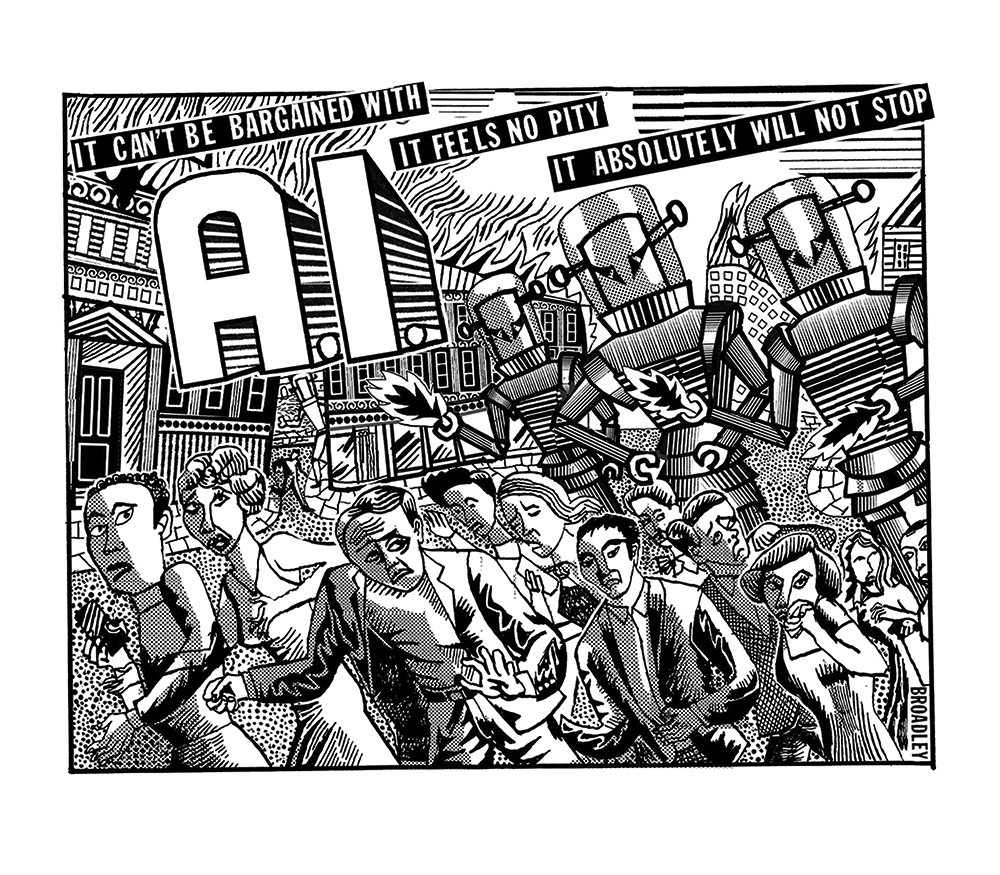

Two nights later, a separate pair aged 23 and 25 were arrested after shots were fired near Altman’s house. OpenAI says the second incident was unrelated to Altman, which may be true – or it may be what you say when your CEO has been attacked twice in one weekend. The FBI has since raided a home in Spring connected to Moreno-Gama.

Moreno-Gama used the handle “Butlerian Jihadist” on Discord and Instagram, a term from Frank Herbert’s Dune, which borrows it from Samuel Butler’s 1872 Erewhon, where machines are outlawed because a philosopher argues they will inevitably surpass their makers. He was an active member of the public server of PauseAI, the loose activist network calling for a global moratorium on frontier AI development.

He kept a Substack for several months, publishing half a dozen posts with titles such as “A eulogy for man,” in which he characterized AI executives as psychopaths gambling with readers’ futures and their children’s lives, and described the arrival of superintelligence as a race to the grave.

In December last year, he posted on Discord: “We are close to midnight, it’s time to actually act.” A moderator warned him. He recommended Nate Soares and Eliezer Yudkowsky’s If Anyone Builds It, Everyone Dies to his Instagram followers.

The book argues, “without hyperbole,” that any group on Earth that builds artificial superintelligence using current techniques will cause the death of every person on the planet. According to its authors, AI engineers do not know what is inside these systems, cannot inspect them in any useful way, and thus cannot guarantee what the thing actually wants. Taken seriously, the book is a frightening piece of work. It has also been picked up outside its home context, where AI apocalypticism is as basic and expected as a goth’s white-powder foundation, and perhaps decontextualized as a manifesto.

Doomsday scenarios, unfortunately, have a tendency to mutate. Tell a depressed or unstable twenty-something – one who may have already been struggling with economic precarity – that the people running the AI labs are going to annihilate his future and that the window for stopping them is closing, and you have handed him a premise he can act on. Moreno-Gama’s own writing is Yudkowsky with every caveat deleted and every call for nonviolent political organization ignored. Not a warning transmission from Berkeley; a shot fired from Texas. I don’t believe this is “radicalization,” as some have argued, at least not in the conventional sense of the word. But making available – not handing, but not hiding, either – a loaded gun to an already-suicidal person is its own kind of problem, and it is not one that admits of an obvious fix. There are only superficially obvious ones.

Jasmine Sun at the Atlantic, one of my favorite writers on this subject, has given the phenomenon its best name: “AI populism,” which is when the public treats AI not as a technology, but as an elite conspiracy against them. I would offer one amendment. “AI populism” is more diffuse than any one ideology, and it has been simmering for longer than AI has been the subject. It’s the symptom of something much greater.

What we are watching is a pulse of anti-modernity running through a scattered series of violent incidents. Most recently: the Palm Springs fertility clinic bombing last May, a shooting in Indianapolis this month that targeted a politician who supported a data center, and both Altman attacks. It expresses itself in whatever vocabulary happens to be available to the person pulling the trigger. Some call themselves efilists. “Efil” is “life” spelled backward; efilism is a fringe antinatalist philosophy that advocates the total extinction of all sentient life as the only way to end suffering.

Others call themselves Butlerian jihadists. Some leave notes that read “NO DATA CENTERS.” And some are still worried about climate change, that old frau most of us seem to have forgotten, still sweeping her floors in the background, tea going cold and cookies stale.

What is easy to miss, but worth marking, is that the communities these individuals claim tend to disown them or deny any affiliation at all. I’d call it damage control if I hadn’t watched the efilists go through the same thing several times already.

These subcultures – ideologies, or whatever you want to call them – aren’t organized cells. This isn’t terrorism as we know it. They’re ideas that break containment among a population that already feels hopeless. Efilist forums (the subreddits, the Discord servers) condemned the Palm Springs bombing, and the subreddit was purged almost immediately. PauseAI banned Moreno-Gama and said he had never been a formal member. Maybe I am naive here. But I think there’s something to it – these aren’t the people you meet at organized events. These are the people who pick it up second-hand.

More important than any of that, the grief underneath these acts is old and becoming more palpable. Yudkowsky didn’t give birth to Moreno-Gama; the world as it is did. I truly don’t believe you’ll find a murderer at Lighthaven, the Rationalist hub in Berkeley. You might find one lurking on LessWrong without an account – another tab open, maybe about climate change or Palestine or the economy or oil, anomie thick around him.

Whatever the case, progress continues its march. Technopessimists are trying to stop something that has, in most of the ways that matter, already happened. The futurist Ray Kurzweil was prescient about many things, and one of them is this: the merger has started. He predicted that the outer layers of our neocortex would be wired to the cloud by the 2030s, extending human thought the way the last round of neocortical expansion produced us. But think carefully about what consumer technology already does. (And that’s just consumer technology.) We are not “building” ourselves a second nervous system. We have built ourselves a second nervous system. We are already as gods; it’s just that the knowledge of this power hasn’t been evenly distributed yet.

The reason the violence will get worse, and also why it will fail, is that its protagonists are far too interested in the technology itself. Brian Merchant’s 2023 book Blood in the Machine is a sympathetic account of the origins of the Luddites, who were smarter and more strategic than the caricature allows. And they also lost. They weren’t anti-technology, they were anti-exploitation. Their enemy wasn’t the loom, but the factory owners. A better-organized movement aimed at owners rather than machines could have done better, and might still.

AI shouldn’t be used to exploit people, and more economic sensitivity in that direction might even defuse some of the anger. But it is a different fight than the one most of the people reaching for Molotovs think they’re having. They aren’t asking the economic questions. Many of the extremists aren’t asking any questions at all – they are angry at modernity, at the very essence of technology.

But though we can change the terms, we cannot stop the arrival of progress. The fight for justice is worth having; the fight against progress is not. You cannot change where our species is going, only how fast it gets there.

“Everyone dies” is not, from the perspective of most young Americans right now, the worst-case scenario. The worst case is that everyone lives and nothing you do matters and the job you trained for is gone and nobody will tell you why – and the billionaires have bunkers. The antinatalist who bombs an IVF clinic and the man who firebombs Altman’s house are answering the same question: what do you do when life has no meaning? What do you do when you feel like the future holds no place for you? I suspect, perhaps controversially, this is what the Columbine shooters Eric Harris and Dylan Klebold were trying, in their evil, to ask, what Sandy Hook killer Adam Lanza was trying to say in his disturbing internet posts – what every man who’s “gone postal” was trying to express.

Here is my prediction: violence will only widen the divide. Some will move toward Kurzweil. Others, Ted Kaczynski. The rest remain in purgatory, in neither one camp nor the other. The doomers will produce more Harrises, Klebolds and Lanzas. I want to shake them and tell them we’re already in tomorrow’s world.

In 2020, I said the real culture war was about technology. This has been true, arguably, since agriculture, since the alphabet, since reading, since the printing press, since the Industrial Revolution. The British journalist Mary Harrington has since argued that the singularity has already happened. She is right. The revolution you are waiting for is over, and you are, for better and for worse, one of its children.
