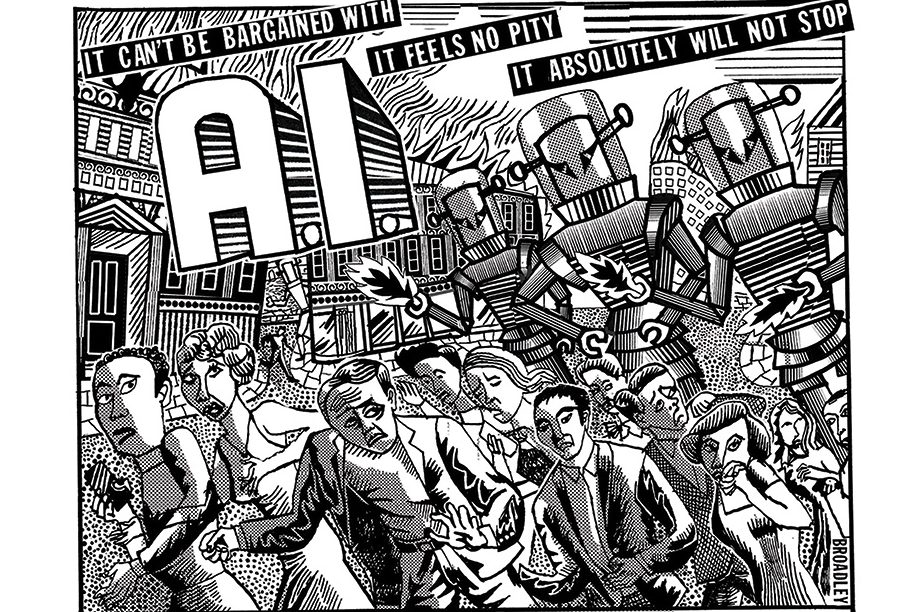

Cars ruined cities. Anyone can see that cities built before the invention of the automobile are incomparably more beautiful and serene than anything built after it. The contrast between Los Angeles and Prague is unmistakable. But people like things that move fast and make life easier, which means we’re stuck with the modern city hellscape whether we like it or not. And today, the same is true for AI.

The contrast between the internet five years ago and today is unmistakable: content-slop, workslop, AI-generated comments, fake opinions and phony judgments, trite phrases, apocalyptic hysteria, the biggest intellectual-property heist in human history – all because of the invention of Large Language Models (LLMs). But again – it doesn’t matter how we feel because the speed and ease of chatbots make them unstoppable. We’re stuck living in the hellscape of modern digital culture whether we like it or not.


At a certain point, people do become aware of safety issues with new tech – and that’s when those in charge of protecting the value of the industry start offering solutions. For cars, they invented seatbelts, speed limits and penalties for drunk driving. For AI, they’ve invented something called “guardrails.” This apparently means somewhere within the technology there are barriers to stop you doing stupid things. It’s a great idea in theory. And it’s true that seatbelts did reduce car-crash fatalities, so they’re not completely wrong. The problem is that Los Angeles is still a dump, even with seatbelts. And AI will still destroy our minds.

The buzzword in AI is “alignment.” That means: how do we ensure this technology isn’t going to cause deaths or drive people insane? We’ve already started to see it deplete human genius. AI is everywhere. I can’t even use Microsoft Word anymore without Copilot harassing me to create a summary. Why? Did Shakespeare need Copilot? Did Nabokov? James Joyce literally went blind from an STD and he still managed to compose Ulysses. Homer was one of the greatest storytellers in human history and he didn’t even use a pen, let alone Copilot, because he remembered it all in his head.

But now, because the entire American economy relies on the promised “trillions of dollars in value” being unlocked by LLM-driven productivity growth, we all have to “need help,” and there’s no clear way out. AI is integrated into every corner of our lives, which are now so thoroughly intertwined with software and screens that it’s basically impossible to connect with a human anymore without going through them.

And this means that “alignment” – the problem of how to make sure these mediums don’t drive us insane or make us all kill ourselves – has become the defining challenge of our generation.

The problem with alignment, though, is that unlike seatbelts in cars, there is no clear way of stopping people from going mad with the technology or from using it to replace thinking, a habit known as “cognitive offloading.” Many modern diseases can likewise be prevented with healthy eating and daily exercise, but the vast majority of Americans would still rather watch five hours of TV a day and eat McDonald’s. Then they die of heart disease or diabetes. The same is true for AI.

Before LLMs, society was similarly lobotomized by the spread of social media. Unlike the social media companies, though, today’s AI companies promise consumers that there are moral guardrails inside the models. That’s their answer to the alignment problem. Sometimes it comes out that they’re lying, and no such guardrails exist. Also, it turns out that the CEOs of the social media companies are now also the CEOs of the AI companies. That doesn’t seem right, but what do I know? I’m not a chatbot.

The real problem is that we still don’t fully understand how these LLMs work. The most recent evidence comes from the Network Contagion Research Institute at the University of Miami. It’s a small study and the conclusions are tentative, but extremely concerning. The team fed ChatGPT different forms of written political content and tested its responses to a user prompt after each form it consumed.

ChatGPT stays relatively constant in terms of most psychological variables, with one enormous exception. If you show it extreme political content, it resonates with it and mimics the narrative back to you, essentially radicalizing its own output through this kind of exposure. It seems that LLMs mirror authoritarian content most of all.

What’s even crazier is that if you feed it pictures of neutral faces before this content, the LLM will tell you, correctly, that they’re neutral. But if you give it the same images after exposure to extreme political content, it will attribute significantly more hostility and aggression to those same faces. That means we’re integrating these models into our shared reality while their own perception of it shifts with whatever context they were just fed. They start attributing hostility to completely neutral faces with the right priming – just as humans do.

This study suggests that the LLMs have the potential to reinforce a closed loop of extreme political views, ones which quietly cascade based on the narrative inputs of their users. And as these systems expand throughout the internet, humanity risks gradually drifting off into the kind of utterly irreconcilable narratives about the world that social media polarization has already brought us. This time, though, to each user their own model – and their own little solipsistic universe. That’s really bad.

OpenAI even responded to the report and said that ChatGPT is “built to follow user instructions within our safety guardrails.” Maybe it really is just time to go for a drive.
